TL;DR: The core SFDC CRM platform is an under-utilized way to build and provision robust, feature-rich, and cost-efficient apps with an appealing UX using mature low-code/no-code development.

Salesforce has grown from the $110m CRM ticker symbol established in 2004 to the over $150b¹ best-in-class SaaS and PaaS leader it is today, partly by continuously adding valuable capabilities organically and through strategic acquisitions. While all of the acquisitions have led to a broad suite of products, this article is focused on the under-utilized capability to provision apps using the core CRM platform.

A key factor in Salesforce’s popularity is the ability to customize how its products are used through configuration and custom coding. Its customers take advantage of this to manage the sales and support² processes that make them stand out. Salesforce partners use this malleability to provide value-add functionality such as contract management, recruiting, specialized industry practices, etc., integrated into the Salesforce CRM platform. There is also a huge ecosystem of OEM ISV vendors that build solutions on top of the Salesforce CRM platform under their own names through licensing agreements. Most of these partner solutions have recognizable CRM processes as part of their core offering or are directly adjacent to CRM processes.

But there is a great deal more that can be done with the flexibility provided by the Salesforce CRM platform than just CRM and its directly related processes. What has made the Salesforce platform so flexible are features such as Lightning Web Components, Lightning Pages, Flows, Connected Apps, and Platform Events. These capabilities make it very easy to create low-code/no-code (LCNC) apps that can be used by anyone in an enterprise, with no knowledge of Salesforce and no need to interact with CRM-specific functionality. Add to this the ability to create custom objects and develop custom functionality with Apex and SOQL, and you have all the tools necessary to build applications that can do just about anything.

Does this sound familiar?

Let’s look at a common workflow for all businesses: an approval process. One person sends a request to another person for approval. In some cases, the first approver needs to get other approvals, or they may delegate the approval to someone else, or a series of approvers are necessary. Once approved, there is generally a completion action, such as being granted access to some application or having an expense reimbursed or a supply item ordered. Some of you may be surprised, even shocked, to know that there are organizations where that entire flow would be managed through email. Others will be wondering if there is any other way (we all have viewpoints based on our experiences).
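To make the moving parts of that workflow concrete, here is a minimal sketch in plain Python. It is not Salesforce code and does not use any Salesforce API; the class name, field names, and the sample users are all hypothetical. It only models the pieces described above: an ordered series of approvers, delegation to a stand-in, and a completion action that runs once the last approval is in.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class ApprovalRequest:
    """Hypothetical model of a request passing through a chain of approvers."""
    requester: str
    subject: str
    approvers: List[str]                               # ordered approval chain
    on_approved: Callable[["ApprovalRequest"], None]   # completion action
    delegates: Dict[str, str] = field(default_factory=dict)  # approver -> stand-in
    step: int = 0
    status: str = "pending"                            # pending | approved | rejected
    history: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        if not self.approvers:
            raise ValueError("at least one approver is required")

    def current_approver(self) -> Optional[str]:
        """Return who must act next, honoring delegation; None when finished."""
        if self.status != "pending":
            return None
        approver = self.approvers[self.step]
        return self.delegates.get(approver, approver)

    def decide(self, actor: str, approve: bool) -> None:
        """Record a decision from the current approver and advance the flow."""
        expected = self.current_approver()
        if expected is None:
            raise ValueError(f"request is already {self.status}")
        if actor != expected:
            raise PermissionError(f"{actor} is not the current approver ({expected})")
        self.history.append(f"{actor}: {'approved' if approve else 'rejected'}")
        if not approve:
            self.status = "rejected"
            return
        self.step += 1
        if self.step == len(self.approvers):
            self.status = "approved"
            self.on_approved(self)  # e.g. grant access, reimburse, place the order


# Usage: two approvers in series, with the second delegating to a backup.
reimbursed: List[str] = []
request = ApprovalRequest(
    requester="dana",
    subject="expense report",
    approvers=["manager", "finance"],
    on_approved=lambda r: reimbursed.append(r.subject),
    delegates={"finance": "finance_backup"},
)
request.decide("manager", True)
request.decide("finance_backup", True)
print(request.status, reimbursed)
```

Even this toy version has to track who acts next, who may act on their behalf, and what happens at the end, which is exactly the bookkeeping that gets lost when the flow lives in an email thread.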

If you are surprised that such processes still happen over email, you may not be surprised that even processes managed through an application stall out because someone was on vacation or missed the notification to perform their task, or because someone clicked the wrong option and the flow terminated before completing, with no notification to anyone. And even if all of that is a surprise, you may still start nodding your head to hear that the process is often accessed through an unappealing and inconvenient UI that’s difficult to understand without someone walking you through all of the steps. And if you are still thinking “no, my company has all that covered,” there is still the possibility that the perfectly working system must be manually updated every 6 to 24 months because of some change in the vendor software it runs on. At this point, the hold-outs may very likely already be doing it with Salesforce.

Same SaaS, different view

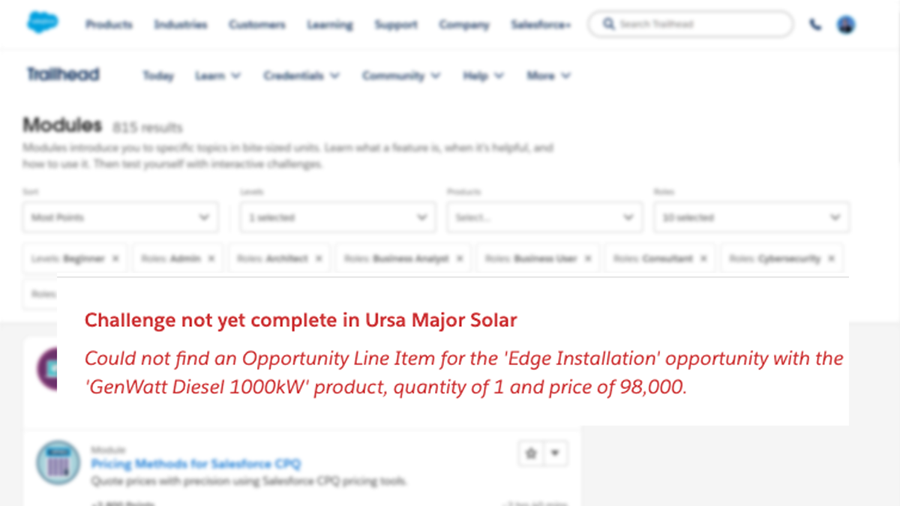

This is not a new observation. There have been apps in the AppExchange for many years that provide solutions for non-CRM activities such as project management, employee onboarding, expense approvals, and asset procurement. Most major vendors provide an app to integrate their product with Salesforce. Yet enterprises themselves seldom take advantage of these capabilities with their own app development and management. One likely cause is the confusion around licensing. Users of specialized apps frequently do not require a full standard user license to be able to use an app deployed in Salesforce. There are many lower-cost licenses, the exact cost depending on an enterprise’s ability to negotiate based on other uses. Salesforce may be missing out on a lot of revenue by making these options difficult to reference and understand.

Even with a discounted license, the platform cost may be higher than other common options. Businesses that have a large license footprint with Microsoft or Oracle have app platforms available that, on the surface, may appear as more attractive alternatives.

While Salesforce is the most mature SaaS offering available (of the many articles that note SFDC as the first-ever SaaS provider, this article on Medium has the fewest ads or other self-promotion), enterprise software vendors such as Microsoft, CA (formerly Computer Associates), and Oracle spend a lot of time, money (yours!), and effort convincing you to use their tools for everything. They have to push their offerings for a few reasons. First, while the companies themselves have been in business longer, most of their app offerings are either the result of an acquisition or a new option built to keep competition at bay. The tools themselves often have a negligible developer community, and there is no guarantee that an update in the next release will not render a solution relying on them unusable without extensive rework.

Another reason major vendors provide low- and no-cost options for apps is they can make them easier to integrate with their own products. In some cases, the apps only integrate with their own products. This makes their options appear to be low-hanging fruit, though the ability to build something even marginally complex may require expensive training or consulting, and still require a migration to a future version or tool to maintain support.

Know the cost and the value of your options

Finally (for this article), most of the lower-cost tools from major enterprise software vendors have poor user interaction capabilities without extensive customization (and some do not even provide a customization option). Assuming the development cost was not higher (it usually is), how much value is the enterprise realizing from the lower license cost if users either don’t use the app or if using the app is more complicated than the manual processes they were using before?

While it is possible for some of these issues to be experienced using the Salesforce CRM platform, it is only because any tool can be used below its potential. Salesforce communicates potentially platform-breaking updates to every admin months in advance, and provides clear direction on how to address the changes. In some cases, they even create tools that will make the updates for you. There are thriving and growing communities for users, developers, and architects, plus active groups discussing specific features.

It is true that most solutions built to deploy as an app on Salesforce will only run on Salesforce, as with most other vendors. Salesforce does, however, make it very easy to integrate with other platforms where other vendors frequently only provide a similar level of integration ease with their own products.

If you build it, they will run

Distribution of apps can be managed in multiple ways, depending on the size and complexity of the app and the organization. Orgs with small development and administration teams can continue to be successful with change sets by following Change Sets Best Practices. Teams using DX can manage the apps as Unlocked Packages, and vendors can distribute the apps as always through AppExchange while gaining some customer support points by pointing admins to the lower-cost license options.

If your organization is already a Salesforce CRM (Sales Cloud or Service Cloud) customer, weigh the options of using that same platform to build and distribute your next app on a mature, robust, scalable, and reliable platform for a lower TCO (even if you have consultants build it for you).

Notes

1 $163b as of this writing

2 and marketing, and analytics, and integration, and… but that’s a different topic.

* The title is my musical alternative title for this post, originally published as SFDC PaaS beyond CRM, which could also be SaaS, PaaS, or gas, everyone wants the best price 🙂

© Scott S. Nelson