I co-authored this eBook for Logic20/20 this year.

Get your copy at https://logic2020.com/insight/salesforce-center-of-excellence-white-paper-download/ for the price of your email address.

© Scott S. Nelson

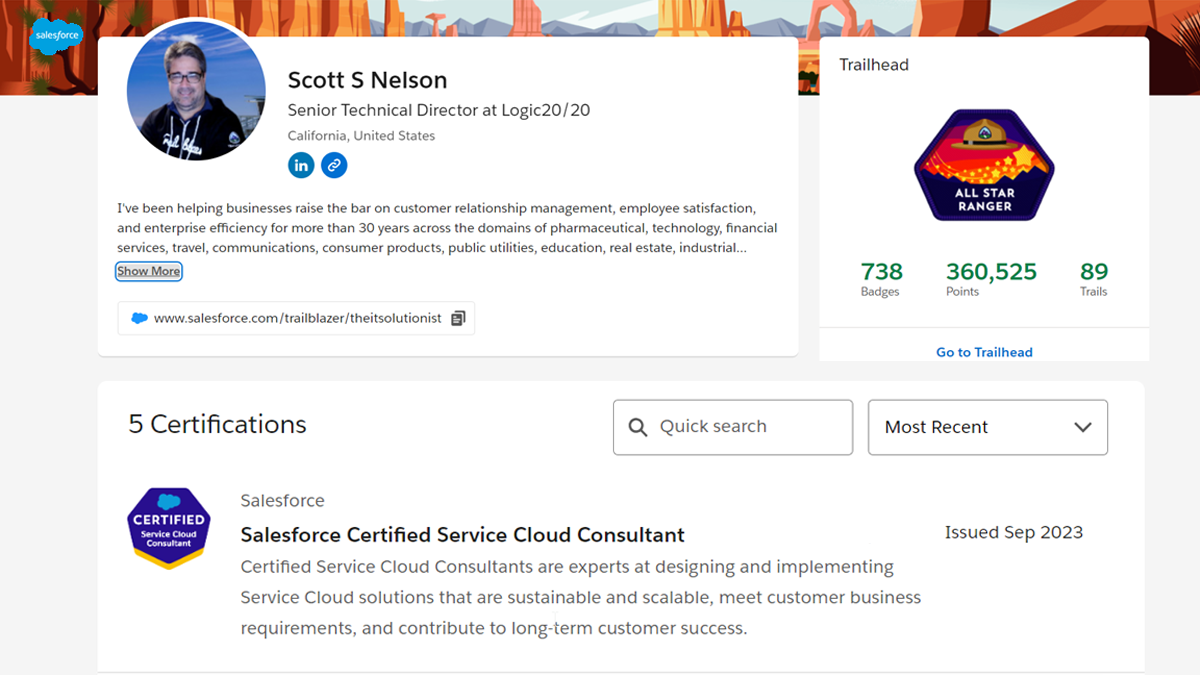

I’ve written about the process I have gone through for all of my Salesforce certifications. The Certification Prep section of my blog currently starts with these, and I believe that many of those posts also have some helpful tips for the Service Cloud Consultant Certification. If you haven’t already passed the Administrator certification, I would suggest starting with my Tips to Pass the Salesforce.com Administrator Certification Exam post. Enough self-promotion, on with the sharing!

As mentioned earlier, this isn’t my first post on certification approaches and if you are preparing for Service Cloud Consultant certification it isn’t your first exam, so I’m going to minimize the elocution here and just drop my formatted notes by section headings for easier reference.

The prescribed place to prep is the Service Cloud Specialist Superbadge: working through all of the prerequisites and then the Superbadge itself will have you well prepared for a passing grade. I did complete the prerequisites but have not yet done the Superbadge project. This is much more a comment on my other time commitments than on the approach, as I highly recommend completing the Superbadge project, preferably right before taking the exam.

If you also have a reason to not be able to fit the Superbadge into your preparation plan, I recommend completing the Get Started with Service Cloud for Lightning Experience trail. Some of the trail modules are part of the Superbadge prerequisites, so it will take less time than you might think.

Quizlet is a great free resource for some exams, and the Service Cloud Consultant is one of them. https://quizlet.com/272794451/salesforce-service-cloud-consultant-flash-cards/

I’ve used Udemy to prepare for every Salesforce certification, and have already enrolled in the Salesforce Data Architect Course for my next planned exam because it was on sale. For those who haven’t used Udemy before, they have frequent sales where the prices are drastically below their regular price. By signing up for their marketing notifications you will eventually get a feel for how low particular courses can drop to, so if you have some planned, buying on sale is a great strategy.

Returning from that digression (my regular reader is used to this), I enrolled in the Salesforce Service Cloud Consultant Certification Course by Mike Wheeler because I had previously taken his Platform App Builder course (as mentioned in Become a Salesforce Certified Platform App Builder) and found it helpful in preparation. I will admit I was disappointed with the Service Cloud course. It was recorded in 2018, and while the Udemy listing says it was last updated 11/22, I couldn’t see where. He continually points out Lightning issues that have long since been addressed and spends a lot of time in Salesforce Classic, which is no longer referenced in the exam. And, while the functionality of Live Agent changed very little when being re-branded to Salesforce Chat, there are a couple of questions in the exam where the Live Agent option is the wrong answer.

And, for the record, I do not get a commission if you enroll in a Udemy course I recommend…and not for lack of trying. Their affiliate program has too much friction to bother dealing with (and it is reflected in my losses as a shareholder).

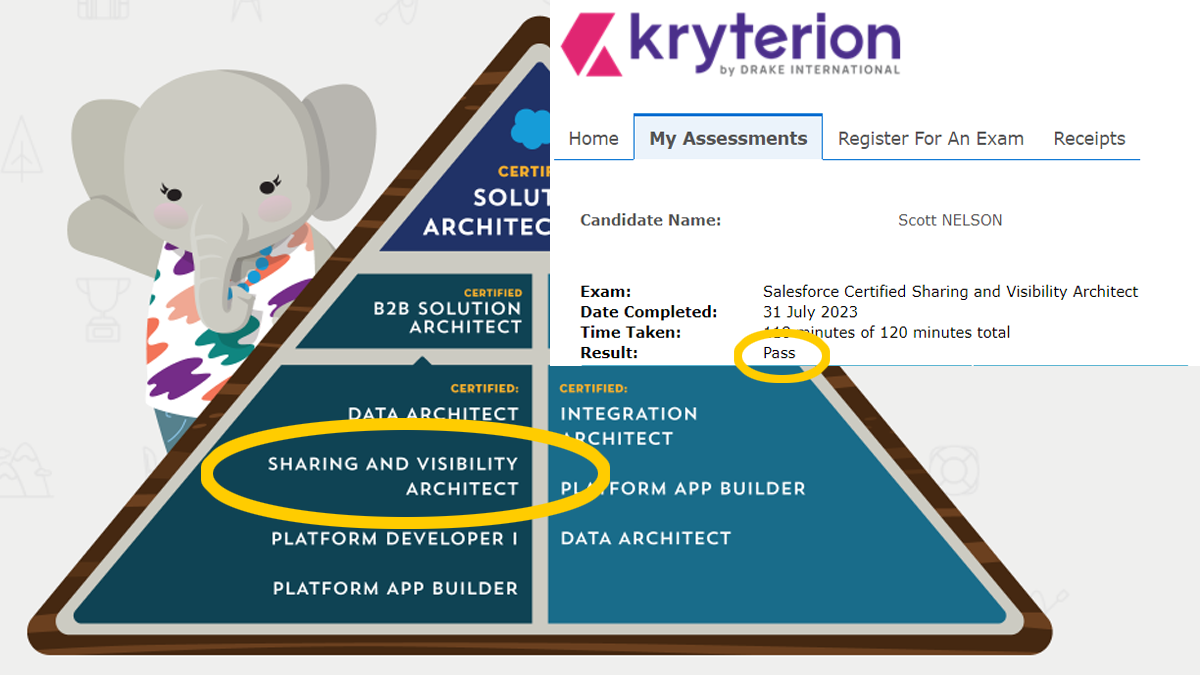

I won two vouchers this year (so far, fingers crossed) with Trailhead Quests. The first voucher was for a $200 exam and the second was for a $400 exam. My certification path is focused on Technical Architect and I had done all of the $200 exams, so I sat on that voucher for a while. When I won the $400 voucher I was a bit surprised to find that it had a shorter expiration period. I immediately scheduled my exam for the expiration date and plowed into My Sharing and Visibility Architect Path.

I rested my brain for a couple of days and decided to use the first $200 voucher on the Service Cloud Consultant certification (sometimes called just Service Cloud Certification). For the Sharing and Visibility Architect exam I tried a few Udemy practice exams because they have served me well for previous exams. I requested refunds for all but one, and that is because I had been too busy to start on the first one and the guarantee period had expired. They were awful. I then went to the Trailhead Community and asked folks there for a recommendation and discovered Focus on Force. I will keep looking for study courses on Udemy, but for Salesforce practice exams, Focus on Force will be my go-to from now on.

My process was to first go through all of the Topic Exams and then start on the Question Bank. Then I had some issues with Question Bank on mobile, so I did practice exams on mobile and Question Bank on PC. After completing the first 20 Question Bank exam, I found I needed to focus on these exam areas:

This is one of the reasons I don’t consider certifications a true test of consulting skill. I have delivered well-received proposals and solutions using Knowledge Management, and am regularly approached for my solution design expertise. The exam questions cover some narrow areas of very broad topics, and the questions I missed are about activities that are generally one-and-done… then forgotten and looked up again when next needed. But certification is important in the Salesforce landscape, so I spent time drilling on things that I would still have to look up again in a couple of years.

I went through the Udemy course in parallel, partly because I only had 55 days to prepare and a demanding day job, and partly to see if this approach was better than first doing the course and then using the practice exams.

Where previously I found the feature to check questions individually instead of at the end of the exam useful, this time I found that I did better if I waited until the end. I think this has a lot to do with my not knowing as many answers at the start as I had for the Sharing and Visibility exam (which I found surprising in itself) and that my expectations changed as I saw immediately that I was wrong. Unless you have an eidetic memory, your frame of mind can impact your score more than the knowledge you have accumulated.

The Focus Review feature has the same issue as the Question Bank when used in Chrome on Android mobile devices. The score calculation at the end fails to complete. It then remembers the answer state the next time either is tried. Because both use random questions, some will have the answers from the previous session. I reported this twice for Question Bank and once for Focus Review and no fix has happened yet. If you run into the issue, please report it and then stick to using a PC for those test types. The answers from the failed mobile session will still be there the first time but subsequent attempts will work properly so long as you don’t try mobile again like I did (sometimes I’m optimistic when I shouldn’t be).

I use https://10015.io/tools/bionic-reading-converter to format my notes for Bionic Reading®. Below are the ones I made to review just before the exam. They are specific to reminders I thought would be useful as I created the notes and I recommend you create your own, or supplement these with your own.

For the Industry Knowledge questions, when not sure always go with the one with the highest cost savings followed by the one with the most potential income result. Again, this is only when unsure. There are some questions where cost is not the key factor of the question, for example when considering the benefits of an email channel, lower cost may not be the correct answer as there are other options that are a lower cost than email.

For processes, Case Stages are driven by the Case Status field

CTI allows telephony services in Salesforce. No desktop software or softphone required.

Customer SLA = Entitlement

List views are automatically created for queues

Customer Service site template for Questions to Case, not Customer Portal

Console History component shows recent primary and sub tabs. Recent items shows records

Email to case has a limit of 2500 per day

Knowledge does not return solutions, only articles that are related to similar cases or questions

Messaging is what was called Live Messaging and not related to Social

Enable Case Comment Notification to Contacts is a support setting

There is no case field alert

Email approvals require Draft emails

Service Console requires Service Cloud User license

Knowledge Publication Teams and Publication States do not exist

In the routing model, you choose whether to push work to agents who are Least Active or Most Available. If you select Least Active, then Omni-Channel routes incoming work items to the agent with the least amount of open work. If you select Most Available, then Omni-Channel routes incoming work items to the agent with the greatest difference between work item capacity and open work items.
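To make the difference between the two routing models concrete, here is a minimal sketch in Python. It is not Salesforce code: the `Agent` class, the `pick_agent` function, and the sample numbers are all hypothetical, and skipping agents with no spare capacity is my own assumption for the illustration.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    capacity: int   # total work item capacity (hypothetical units)
    open_work: int  # work items currently open for this agent


def pick_agent(agents, routing_model):
    """Pick an agent the way the note above describes each routing model."""
    # Assumption for this sketch: agents already at capacity are skipped.
    available = [a for a in agents if a.open_work < a.capacity]
    if not available:
        return None
    if routing_model == "Least Active":
        # Least amount of open work wins.
        return min(available, key=lambda a: a.open_work)
    if routing_model == "Most Available":
        # Greatest difference between capacity and open work wins.
        return max(available, key=lambda a: a.capacity - a.open_work)
    raise ValueError(f"Unknown routing model: {routing_model}")


agents = [
    Agent("Ana", capacity=5, open_work=3),   # 2 units of spare capacity
    Agent("Ben", capacity=10, open_work=4),  # 6 units of spare capacity
]

least_active = pick_agent(agents, "Least Active")
most_available = pick_agent(agents, "Most Available")
```

With these sample numbers, Least Active picks Ana (3 open items versus 4) while Most Available picks Ben (6 spare units versus 2), which is the kind of distinction the exam questions tend to probe.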

Internal metrics focus on what happens inside the contact center, and external metrics focus on what happens outside the contact center.

Case Sharing Rules by Record Owner:

Public Groups

Roles

Roles and Subordinates

Full disclosure: This is a quick draft from notes made during my prep journey and quickly edited after passing. Based on comments received, I may revise and elaborate further (hint, hint)

After completing the Administrator and Developer certifications, the App Builder certification seemed easy, and I had an expectation they would continue to get easier. I was right and wrong.

I struggled a bit with this certification, for a variety of reasons. First, the earlier certifications are very popular as the best of the entry-level exams. Popularity in this century leads to quantity, and there was lots of high-quality study material available for free and at a reasonable cost. I used to find the Salesforce sharing and visibility topics a bit confusing. They are highly flexible, and flexibility can lead to complexity. The thing about complexity is that when it is well-managed it has a simple core. Getting to that core is the challenge with understanding the subject areas of sharing and visibility and preparing for the certification exam.

For most of my earlier certifications I started with digging deep into the material and then using practice exams to help identify my weaknesses. There are not a lot of courses for sharing and visibility, and many that are out there are out of date. I think part of this has to do with the diminishing number of test takers for this one, coupled with the complexity of the material. Higher effort to address a smaller market reduces the number of people interested in creating courses. I did find a decent subject matter course on Udemy, my usual go-to for learning anything quickly at a reasonable price (so long as I can wait for one of the frequent sales). I also found a good, exam-focused series on YouTube that I highly recommend for those like me who want multiple sources and frequently treat YouTube videos as podcasts, using audio only.

Of course, I also did all of the related modules and trails on Trailhead. There were fewer of these for sharing and visibility compared to my previous certification, too. I also found them less effective in making the content stick in my head.

Where I struggled was finding practice exams. Most of the ones on Udemy for sharing and visibility are garbage (sorry, Udemy…and I’m a stock-holder, too). One is not too bad, though I think I give it some leniency because of the comparison to what else is available. I finally got frustrated and posted on Trailhead (where I am guilty of answering more questions than I ask, a poor learning strategy). The community did not let me down and came back with a solid recommendation for focusonforce. Their format is a bit different, in that they have practice exams, and they also have section-focused exams. I missed the section-focused being separate from the practice exams until the last minute. I would have felt much more prepared had I found them earlier. They also have a nice feature of 20 random questions that are mixed in proportion to the exam topic mix, which was great for when you don’t have a lot of time and still want to practice.

Oh, yeah, another cool feature from focusonforce is the ability to see the answer after each question instead of at the end. I know there are some free sites on other topics that do this, but this is the first time I have seen it with a high-volume and high-quality set of practice exams. It made it easier to make notes on my weaker areas. With better notes, I then used Bionic Reading® formatting to make it easier to read them over and over again.

No matter what exam you are preparing for or where you get the practice exams, I recommend taking the practice exams using multiple paces: fast, slow, checking each answer as you go, and checking at the end.

One of the reasons I was so dissatisfied with the Udemy practice exams is that the questions are so long and complex, yet it is still 60 questions each. Well, turns out most of the questions really are long and complex. Still, the Udemy ones missed the actual style of the real questions. Understandable, given the level of complexity, but still disappointing.

When doing the real thing, follow the standard practice: speed through, mark for review, etc. The value of practice exams is more than learning the answers to likely questions. The highest value is in adopting the mindset and thought processes in the context of how exam questions are stated and rated, which is more complex and more constrictive than a typical design session where one can review the problem repeatedly over time and adjust.

Below is a list of resources I used. I hope they help you in your own pursuit.

The course I took was Salesforce Certified Sharing and Visibility Architect Course by Walid El Horr

The practice exam that was OK on Udemy is Salesforce Sharing and Visibility Practise Tests – 100% PASS (that is the title, not an endorsement by me).

Salesforce Sharing and Visibility Architect Practice Quiz and Sample Questions

Sharing and Visibility – Salesforce Certification by CertCafe

Identity and Access Management in Salesforce by Salesforce Apex Hours

Combined playlist on my channel

Generated with https://10015.io/tools/bionic-reading-converter

runAs() is only for test classes

runAs() does not enforce user and system permissions

runAs() does not enforce FLS

Tagging rules have only three options:

1. Restrict users to pre-defined tags

2. Allow any tag

3. Suggest tags

There is no View Content permission

The Salesforce CRM Content User is a Feature License enabled at the User Level (not Profile)

Granular locking is default

Granular locking processes multiple operations simultaneously

Parallel recalculation runs asynchronously and in parallel thus speeding up the process. Creating sharing rules or updating OWD must wait until the recalculation is complete

Initialize test data and variables before the startTest method in a test class

There is NO Account Team Access

Team Member Access is how to view access.

While the permission is Edit, the Apex method is isUpdateable()

While the FLS column is View, the API method is isAccessible()

If you want to see group access, look in the group maintenance tables, not the sharing settings for the object.

User above a role in the hierarchy can edit opportunity teams of users in subordinate roles

File types cannot be restricted by the library

Opportunities have a Transfer Record permission

Experience Cloud uses Sharing Sets

Sharing rules cannot set base object access

PK chunking to split bulk api queries for large data sets

Rapid access usually means a custom list view

A library with more than 5k files cannot have a folder added

Sharing set in Experience Cloud allows access only to account and contact records.

Share groups are only for HVP users

Use Schema.DescribeSObjectResult and Schema.DescribeFieldResult to check permissions

Session based permission set group is more efficient than multiple session based permission sets

There is no Partner Community Plus

Sharing sets can be assigned to profiles

Criteria based sharing rules are only for field value criterion. If no field value criteria, use ownership based sharing rules

Max file size for UI upload is 2GB

EPIM = Enhanced Personal Information Management

Delegated external administrators can’t see custom fields on user detail records

Sharing Hierarchy button is a thing that shows the hierarchy

Share Groups are not available for Partner Community Users

If the default OWD access is changed for an object, it is no longer controlled by parent

There is no Permission Object

Sharing Rules share to groups and roles, not individuals

An Enhanced Transaction Security policy can be triggered by request time length

If only one custom record type is assigned to a user that is the default type for that user.

Territories can belong to public groups

Activities are child objects of any of the following parents: Account, Opportunity, Case, Campaign, Asset and custom objects with Allow Activities.

The ‘with sharing’ and ‘without sharing’ keywords can be declared at the class level, but not at the method level.

The Group Maintenance tables store Inherited and Implicit grants, i.e., the extrapolated grants, which makes sense as extrapolation is more compute-intense than a query.

Partner Community can use Sharing Rules

External OWD must be equal or more restrictive than the Internal OWD
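As a memory aid for that last rule, here is a small Python sketch of the comparison. It is an illustration only, not anything Salesforce exposes: the `ACCESS_LEVELS` list is deliberately simplified to three levels (real objects can have others, such as Controlled by Parent), and `is_valid_owd_pair` is a hypothetical helper name.

```python
# Simplified ordering from most restrictive to least restrictive.
# Assumption: only these three levels are modeled in this sketch.
ACCESS_LEVELS = ["Private", "Public Read Only", "Public Read/Write"]


def is_valid_owd_pair(internal, external):
    """Return True when the external default is equal to or more
    restrictive than the internal default."""
    return ACCESS_LEVELS.index(external) <= ACCESS_LEVELS.index(internal)


# Internal Public Read Only with external Private is allowed.
allowed = is_valid_owd_pair("Public Read Only", "Private")

# Internal Private with external Public Read Only is not.
rejected = is_valid_owd_pair("Private", "Public Read Only")
```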

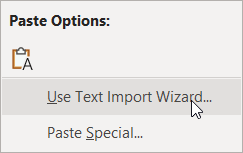

The upside to modern PCs is that so many file associations are created for us automatically. This is offset to a degree because the default setting in Windows is to hide file extensions, so that what you see is just the name and an icon. This is especially problematic for CSV files as most people who use Windows have Excel and since the friendly green icon is fronting the file the habit to just click it prevails.

Sometimes, this association is fine. Simple text data delimited with commas or tabs can be converted to Excel format with no manual intervention and everything looks fine. But if any of the fields have commas, or are dates or numbers, then Excel makes lots of assumptions that it doesn’t tell you about and changes the data to match the assumptions. One does not need to be a data scientist to know this is bad. Indeed, one only needs a pulse to be annoyed by it, and if you don’t know why the data is being messed up, frustration is a common reaction.

The first thing I recommend is to change your Windows settings to show file extensions. There are instructions provided by Microsoft for this here.

Next, develop the habit of opening CSV files using one of the more time-consuming (and reliable) methods. Method 1 is to open the CSV file in a text editor (my personal preference is EditPad, and there is a good list of others here). Then create a new Excel workbook or sheet, copy the contents of the CSV file from the text editor and use the Paste > Use Text Import Wizard option.

The simplest approach is to accept the defaults on the first two steps and, on the third step, select all columns by holding the Shift key, scrolling to the right and clicking the last column, then choose the Text column format.

This will create a clean separation by column with no auto-formatting applied. Then Finish and you will have the data as you expected.
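If you are comfortable with a little scripting, the same "everything is text" result can be had without Excel at all. The sketch below uses Python's standard `csv` module; the sample data and the `read_csv_as_text` helper name are made up for illustration. The point is that the parser hands every field back as a string, so leading zeros and embedded commas survive.

```python
import csv
import io

# Hypothetical sample: a quoted field containing a comma, a ZIP code with a
# leading zero, and an amount with a trailing zero.
SAMPLE = 'Id,Name,Zip,Amount\n001,"Acme, Inc.",02134,1.50\n'


def read_csv_as_text(text):
    """Parse CSV text into rows of strings with no type guessing."""
    return list(csv.reader(io.StringIO(text)))


rows = read_csv_as_text(SAMPLE)
header, first_record = rows[0], rows[1]
```

Here `first_record` keeps `001`, `02134`, and `1.50` exactly as typed, and `Acme, Inc.` stays in one column, which is what the Text column format in the import wizard gives you.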

For larger files, I suggest reading this thread on SuperUser.com.

When creating data in Excel for CSV upload, format all the columns as Text before saving as CSV. If you have to do the same data transforms regularly, I recommend creating a template with formulas.
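For repeated transforms, a script can play the role of the template. This is only a sketch under my own assumptions: the `rows_to_csv` name and the sample rows are hypothetical, and the values are kept as strings from the start so nothing gets reformatted along the way. It uses Python's standard `csv` module and quotes every field so commas inside values are safe.

```python
import csv
import io


def rows_to_csv(rows):
    """Write rows of strings as CSV text, quoting every field."""
    buffer = io.StringIO(newline="")
    writer = csv.writer(buffer, quoting=csv.QUOTE_ALL, lineterminator="\n")
    writer.writerows(rows)
    return buffer.getvalue()


# Values are already text, so leading and trailing zeros are preserved.
csv_text = rows_to_csv([
    ["Id", "Name", "Zip"],
    ["001", "Acme, Inc.", "02134"],
])
```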

Probably the hardest habit of all for most users to adopt: when opening the file in Excel just to view its contents, select No when prompted to save the changes.

Developer shortages, shareholder demands, and digital-first competitors are a combination that fuels the most recent popularity boost of low-code/no-code (LCNC) solutions to business application needs. LCNC platforms are now widely used for custom software solutions and business transformation across industries such as healthcare, banking, finance, insurance, manufacturing, and retail. The idea is not all that new. I remember building some feature-rich functionality using MS Works back before we had Google to search for shortcuts on and AOL was mailing floppy disks every week to the same addresses.

The tools have been getting better and better over time, with a major acceleration in quality as developers began using drag-and-drop to quickly outline complex applications that could then be tweaked for robustness and complexity. These platforms now leverage visual, drag-and-drop interfaces, along with pre-built components, to enable rapid application development and significantly shorten project timelines. Businesses spent tons of money on tools to make developers more efficient, so the tools kept getting better until they evolved into something that non-developers could use to create fairly sophisticated functionality (and the developers went back to the command line). Some of the leaders in the space are the Salesforce.com platform, Microsoft Power Apps, and Appian, to name a few.

LCNC democratizes application development by empowering business users, non-technical users, and citizen developers to create applications quickly and easily, while professional developers can focus on more complex tasks. The adoption of low-code/no-code platforms is projected to grow significantly, with estimates suggesting they will be used in over 65% of application developments by 2026, driven by the need for agile delivery and faster development cycles.

It’s easy to see why business buyers are attracted to LCNC options. LCNC tools enable employees to quickly create applications tailored to their business ideas and departmental workflows. These platforms empower employees in various departments to build tools that fit their specific needs, making it easier to innovate and respond to changing business requirements. Common internal use cases for LCNC platforms include vacation request trackers, employee onboarding portals, and project management dashboards. Developer shortage? No problem: your knowledge workers can drag-and-drop their own tools together. Need to improve margins? Tell your developers to always use clicks over code and reduce the time to deliver functionality. It is definitely that easy, because you saw a YouTube video where someone built an app in 10 minutes or a live demonstration at a conference where the presenter solved a problem in 20 minutes that your developers took six months to complete two years ago. You might still need developers for the really complex stuff, but now they can do more, faster, with fewer people, and why do you need architects anymore?

Now, in a quickly written post I might just say that the entire previous paragraph is describing something that only exists with smoke and mirrors and is mostly vaporware, and that you are doomed to difficult, time-consuming solutions. I’m not going to say that, but I hope you haven’t gotten rid of those architects yet, because they can help make the right decisions about clicks over code.

Hopefully you have heard the expression “there is no cloud; it is just someone else’s computer.” Well, in that same pattern, there is no LCNC; it is just someone else’s code. The great thing about this is that when there is a problem with the code, or the code can add more features, you rarely need to make any changes to get those updates. However, there are always updates, because the people using LCNC always seem to find the limits of imagination applied to the underlying code, and then you can’t go any further until it is updated. And if there aren’t enough people sharing the same problem, that might be a long wait. Thorough testing will help find these limits and let you know if you need to go another way. Custom development and traditional software development remain essential for businesses seeking unique functionalities, competitive advantages, and long-term scalability, especially when highly unique or complex functionality is required. While LCNC platforms are powerful, they may struggle with highly complex algorithms or extreme customization, which may require traditional ‘pro-code’ development.

Those slick demos on YouTube? Not all, but many, have been edited for time and flow. I watched a video about writing CI/CD configuration files recently and was both impressed and amused that the person kept using the wrong term and added a correction notice on the screen each time, at one point including “I don’t know why I kept saying the wrong thing.” This honesty was refreshing, and it bleeds over to the live demos, where clearly they aren’t editing out extra time or typical mistakes that result in doing something over. Live demos are the result of multiple rehearsals and (usually) careful selection of use cases that seem complex when seen for the first time, because the audience’s experience with that challenge is how hard it has been in the past, and they don’t consider the demonstration is of a tool that has been purposely designed to solve that issue. As Arthur C. Clarke said, “Any sufficiently advanced technology is indistinguishable from magic.”

That said, for the right use cases, LCNC will be easier and faster. The most important thing to remember about LCNC platforms is that they continuously mature. Just because it was not up to the task two versions ago doesn’t mean that it won’t fit the bill perfectly now. Take time to review the latest capabilities for any solution rather than falling back to an earlier approach. Conversely, don’t take LCNC claims at face value. It may not address your specific use case, or it may be an immature solution that will become unavailable in the next release.

While LCNC platforms empower citizen developers, they can also introduce risks such as shadow IT if not properly managed. Organizations should implement compliance and governance protocols when adopting low-code/no-code platforms to ensure best practices. Additionally, low-code/no-code platforms can significantly reduce development time and costs by minimizing the need for specialized development talent and reducing IT bottlenecks.

Finally, as you leverage LCNC for application development and automate business processes, it is crucial to prioritize documentation and monitoring to ensure that business processes are properly managed and maintained over time.

“Act in haste, repent at leisure,” is both an incorrectly stated idiom and perfect description of why LCNC is not “eating the world” as software itself did. The bad rep of citizen development and shadow IT isn’t as much about solutions not being controlled by IT as it is about there being no organization of the solutions. Redundancies and inefficiencies always occur when there is too much reliance on LCNC, and these are the least bothersome of the side effects. More of a concern, and less obvious (until it is too late), is the lack of testing in non-production sandboxes. Automation is a key target for LCNC, and while it can make doing tedious tasks much faster while reducing the effort, it can also create havoc much faster with less visibility into the root cause.

Another ill side effect of LCNC is the attitude that since it is very clear how an implementation is put together at the time, it will be just as clear to anyone else at any future time. This is usually an illusion (or delusion, depending on perspective). I know senior developers who, when rushed to deliver code without documentation, can look at something they wrote only a few days ago and have to laboriously trace everything back to remember why they wrote it. Now take something that someone with little software experience threw together (literally or figuratively) that did something really useful that people began to depend on, and then the person who built it leaves the company. Sooner or later, that solution will have an issue, and the odds are very much against someone finding where it is running, how it is working, and what needs to be fixed. Everything grinds to a halt until it is recreated from scratch. Even worse is when the orphaned solution clashes with another and very automatically creates havoc with the data in production.

This is not to say these tools and solutions aren’t valuable. They just need some supervision, documentation, and monitoring to ensure they are assets rather than liabilities.

This particular area is similar to the previous one, but a little different in how to prevent it from being a problem. Doing one simple thing with declarative programming (another type of LCNC) is very powerful in that it saves time and decreases maintenance. So, doing many things, all strung together, is even better, right? Well, sometimes. Some processes require a lot of steps, and when initially building them out, where that is the only focus of the person doing the work, it all seems perfectly clear. But later, if there needs to be an update or an unforeseen problem arises, it can become extremely hard to trace through dozens (or more) configurations on a palette or file to locate where to make the change or fix the issue.

The solution to complexity from too many simple things strung together is to break the steps up into logical units. “Logical” may be in the mind of the developer, which is where architects come in. The steps can be categorized and segmented and then used (and re-used) in a simpler configuration. Sometimes steps are complex enough that it is better to have some custom code that can then be used by the configuration to complete certain pieces. Decomposition is something not often thought of with LCNC solutions, and it’s what makes the difference between great solutions and great re-writes following great disasters.

Well, like some of the shortcuts in the use of LCNC, this last item is really to round the list out to five. I think it is clear from the first four considerations that while consequences can sometimes be seen coming, and are always clear in hindsight, a formulaic decision about when to use LCNC and when to call in the senior developers and architects just isn’t possible.

There are, however, some safety rails that can help steer things in the right direction. First are enterprise architecture principles that reflect the organization’s culture around such solutions. Some businesses are built around a coding culture and LCNC needs to be isolated to specific usage. Other businesses are dependent on software to run but have a very small IT group where code is not their thing.

The reason I used the plural principles is that the best option will vary by context. A solution on the Salesforce.com platform should be declarative over custom … except when it is impractical. For example, one could probably manage very complex message routing or data synchronization using Flows, but Apex code will be much easier to maintain for the bulk of the solution. Weaving together declarative automation with custom code is often the most elegant solution to something complex, business critical, and not yet a full-fledged feature (or part of what differentiates your business). And check back each time you update it or need something that is new and similar to see if the Salesforce offering hasn’t since come up with an answer that will require less maintenance on your part. Missing the need to change the solution when the requirement changes is not limited to LCNC, though it is identified more quickly by the nature of LCNC being designed and built “top down” where custom code grows “bottom up”, delaying and obscuring the accumulation of technical debt.

Click, drag, and drop is what moved computers from the basement to the backpack, and even though “Internet speed” is a 20+-year-old term that now has different capitalization and meaning, we continue to look for ways to do more, faster, and easier. Low- and no-code is one way we continue to pick up the pace. We just need to remember to check that our laces are tied and we have the right shoes for the right track before really pouring it on to reduce the possibility of a face-plant at the finish line.

Originally published at https://logic2020.com/insight/low-code-no-code-considerations/