TL;DR: Traffic researchers discovered that adding more road often makes congestion worse, not better. Most AI rollouts are doing exactly that. The fix is similar to what highway departments figured out decades ago: change behavior first, then worry about capacity.

I have spent more hours of my life commuting than I care to remember, and I have mixed feelings about it (this is not about WFH vs RTO, which I also have some ambivalence about). On the one hand, I can always think of things that would feel more productive. On the other hand, the mental autopilot leaves room for solutions that eluded me during the working day. It is also a good time to contemplate paradigm shifts as they play out: from paper to digital, from MVC to SOA, from on-premise to cloud, and now everything(?) to AI.

The transitions that stick share a pattern. Not a hype arc. A pressure arc. The system resists, adapts, then acts like it was always this way. Different technology, same dynamics.

A lot like how people behave on the highway.

Deliberate Slowing to Speed Things Up

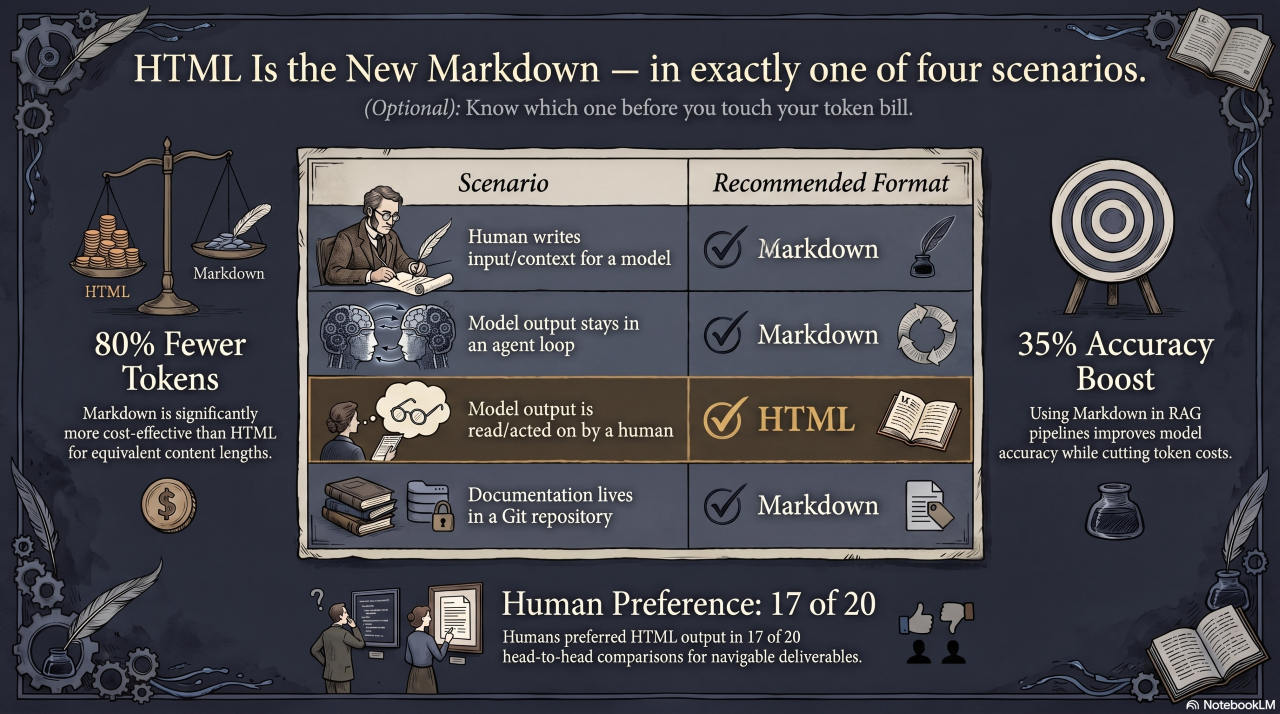

Transportation researchers spent years collecting data on traffic flows, tracking volumes before and after road expansions, mapping where congestion formed and how fast it returned. When Gilles Duranton and Matthew Turner analyzed the numbers across US cities, what they found ran counter to the prevailing assumption. A one percent increase in highway capacity produced almost exactly a one percent increase in driving (among other things). Add a lane, and within a few years congestion is back where it started, sometimes worse. They named it The Fundamental Law of Road Congestion. The instinct to build more road was not just ineffective. It was making the problem worse.

Separate research produced an equally counterintuitive result. In 2008, Yuki Sugiyama and colleagues put 22 cars on a circular track and told everyone to hold a steady speed. No merges, no accidents, no bottleneck. Yet above a certain density, a jam appeared out of nowhere and rippled backward through the pack. One driver braked slightly, the car behind overcorrected, and the wave propagated. A traffic jam with no external cause. The fix was not more road. It was more deliberate driving: leave a gap, anticipate, resist the urge to overcorrect.
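For anyone who wants to watch the ripple happen, here is a minimal toy sketch. It is not the model or the setup Sugiyama's team used. It is a deterministic cellular automaton I am assuming for illustration: cars on a ring of cells speed up by one cell per step, never exceed a speed limit, and never move farther than the empty space ahead. The function name and every parameter are hypothetical. Car 0 brakes to a stop exactly once, and the code counts how many cars ever drop below cruising speed.

```python
def simulate_ring(num_cars, spacing, v_max=5, steps=60, brake_step=5):
    """Toy ring road: return how many cars ever drop below v_max.

    Illustrative assumption, not the published model: cars start evenly
    spaced at cruising speed, and car 0 brakes to a stop once at brake_step.
    """
    road_length = num_cars * spacing
    positions = [i * spacing for i in range(num_cars)]
    speeds = [v_max] * num_cars
    slowed = set()

    for step in range(steps):
        # Empty cells between each car and the one ahead (car i follows car i + 1).
        gaps = [
            (positions[(i + 1) % num_cars] - positions[i] - 1) % road_length
            for i in range(num_cars)
        ]
        # Speed up by one cell per step, capped by the limit and the gap ahead.
        new_speeds = [min(speeds[i] + 1, v_max, gaps[i]) for i in range(num_cars)]
        if step == brake_step:
            new_speeds[0] = 0  # the single tap on the brakes
        for i, v in enumerate(new_speeds):
            if v < v_max:
                slowed.add(i)
        # Every car moves at once, using the speeds computed above.
        positions = [(positions[i] + new_speeds[i]) % road_length
                     for i in range(num_cars)]
        speeds = new_speeds

    return len(slowed)


print(simulate_ring(22, spacing=6))   # dense ring: prints 22
print(simulate_ring(22, spacing=21))  # sparse ring: prints 1
```

Same 22 cars, same single brake tap. On the dense ring every car ends up slowing. On the sparse ring only the driver who braked does. In this toy the wave dies out after one lap, which is kinder than what happened on the real track, but the point holds: the gap absorbs the mistake.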

These findings changed practice. Highway departments that once defaulted to expansion started investing in variable speed limits, ramp metering, and traffic calming measures. Smaller interventions, aimed at behavior rather than capacity, moved more cars through at lower cost. The road mattered less than how people used it.

Same Jam, Different Road

The parallel is direct, and I have watched it play out from both sides of the table. MIT’s Project NANDA found that after roughly $30 to $40 billion in enterprise AI spending, about 95 percent of organizations saw no measurable impact on the bottom line, with only around 5 percent of pilots producing real revenue. That is not a rounding error. It is the Fundamental Law of Road Congestion applied to a software budget: thirty to forty billion dollars' worth of new lanes, and most of the cars are barely moving (or heading in the wrong direction).

The organizations stalling out follow a common pattern: tools deployed before workflows get redesigned; licenses purchased before anyone has defined what problem they are solving; metrics devised to measure what was done rather than what is possible. (That last one reminds me of my favorite quote of contested origin.) When results disappoint, the leadership of organizations struggling with AI adoption either pulls back everything or doubles down with a broader mandate and no clearer strategy. Both reactions make the jam worse. Adding capacity without fixing the underlying process is the detour. The congestion moves, but it does not clear.

The phantom jam dynamic shows up here too, and it spreads faster than any highway bottleneck. One over-tasked leader reads a discouraging headline, taps the brakes, and suddenly the whole initiative is under review. Or a competitor ships something flashy and someone stomps on the gas with a company-wide mandate before anyone is ready or knows where to go. The density of anxiety crosses a threshold, and the shockwave does the rest. Nothing structural changed. Behavior caused the jam, and only behavior can smooth it.

Where the Congestion Actually Forms

The real bottlenecks in AI adoption are rarely where struggling enterprise leadership looks for them. The tool is not usually the problem. The problem is everything around the tool: unclear ownership, undefined success criteria, and a workforce that was handed a license with no guidance on what problem it was supposed to solve. I have seen teams buy Copilot seats for every developer in the org and then measure success by activation rate. They got activation. They did not get output. Those are not the same thing, and conflating them is how you burn a year and come back to the next planning cycle with nothing to show for it.

There is also a shadow traffic problem that nobody talks about enough. When the official AI rollout is too slow, too restricted, or too vague, people route around it. They use personal ChatGPT accounts. They paste sensitive data into consumer tools. They build their own prompts in the gaps the IT department did not anticipate. This is not rebellion. It is adaptation. It is what happens when capable people hit a congestion point and look for the on-ramp the original road designers missed. The workaround is a signal, not a discipline problem. Ignoring it does not make it stop. It just makes it invisible.

Governance is the infrastructure that never gets funded until something goes wrong. Who is accountable when the model is confidently wrong? What happens to the output when the underlying model changes? Which data is allowed in, and which is not? These are not legal abstractions. They are the guardrails that let the rest of the system move faster. Organizations that build them early spend less time recovering from incidents and more time compounding on the investment. Skipping governance to move faster is the merge lane strategy. It feels efficient right up until everyone is stopped.

The Mandate That Made It Worse

Everett Rogers mapped how innovations spread through a population decades before generative AI existed: early adopters first, then the majority, then the laggards. The laggards are rarely the problem. They are often waiting for the road to be built around the tool: clear governance, reliable data, a documented sense of who is accountable when something goes sideways. Mandating faster adoption without building that infrastructure does not accelerate the curve. It creates congestion earlier in the journey, and the shockwave from that early jam takes longer to resolve than the time you thought you were saving.

Organizations that lead with training and strategic framing before deployment consistently outperform those that lead with usage mandates. When people understand what a tool is for, what it does well, and where it falls short, they use it in ways that compound over time. When they are handed a tool and told to use it more, they find ways to hit the metric without changing how they actually work. Activity goes up. Value does not follow.

Incentives tied to outcomes, paired with genuine investment in skills and strategy, produce something different: people who understand where they are going and why the tool helps them get there.

Getting Somewhere

Better roads move more people than bigger roads. The organizations getting real returns from AI are not the ones with the most licenses. They are the ones with the clearest processes, the best-trained people, and a strategy that connects the tool to an actual destination.

Define what success looks like before you deploy. Name the problem before you buy the solution. That clarity reduces friction for everyone involved, and it makes the detours worth something when they happen, because the detours will happen. The unexpected use case, the team that figured out something no roadmap would have suggested, the finding that reframes the whole initiative: those are not failures of planning. They are what happens when capable people have good tools and room to move. The goal is not to prevent detours. It is to be in good enough shape to recognize a promising one when it appears, rather than sitting too stuck to turn.

The lane was never the problem.

(Next up: the joys of reading on public transportation.)

© Scott S. Nelson