One of my rare posts on my employer’s site: Taming Complex Cloud Integrations with Informatica Cloud | Primitive Logic

© Scott S. Nelson


Ran into this recently and wanted to share it with my loyal reader… An Informatica Cloud Application Integration solution that worked wonderfully through months of UAT, and flawlessly for the first few weeks in production, suddenly produced complaints from stakeholders because the files output at the end of the process were empty.

Looking at the process logs, there were no errors shown. Every technologist’s favorite finding (sarcasm, for those not blooded in debugging production issues).

The application functionality is relatively simple: listen for new files in a remote folder via SFTP, download the files and rename them for processing by a Data Integration Mapping Configuration Task (MCT), then run the MCT. I’ll spare you the story of tracking down the details of the missing files, except that once they were obtained, the pattern I noticed was that the missing files were the largest in the brief history of the application. Not much more deduction was required to figure out that in the source environment the files were being written directly to the outgoing folder rather than being created elsewhere and dropped in when complete. The larger files took longer to write than the polling interval, so they were being brought down in name only (pun intended).

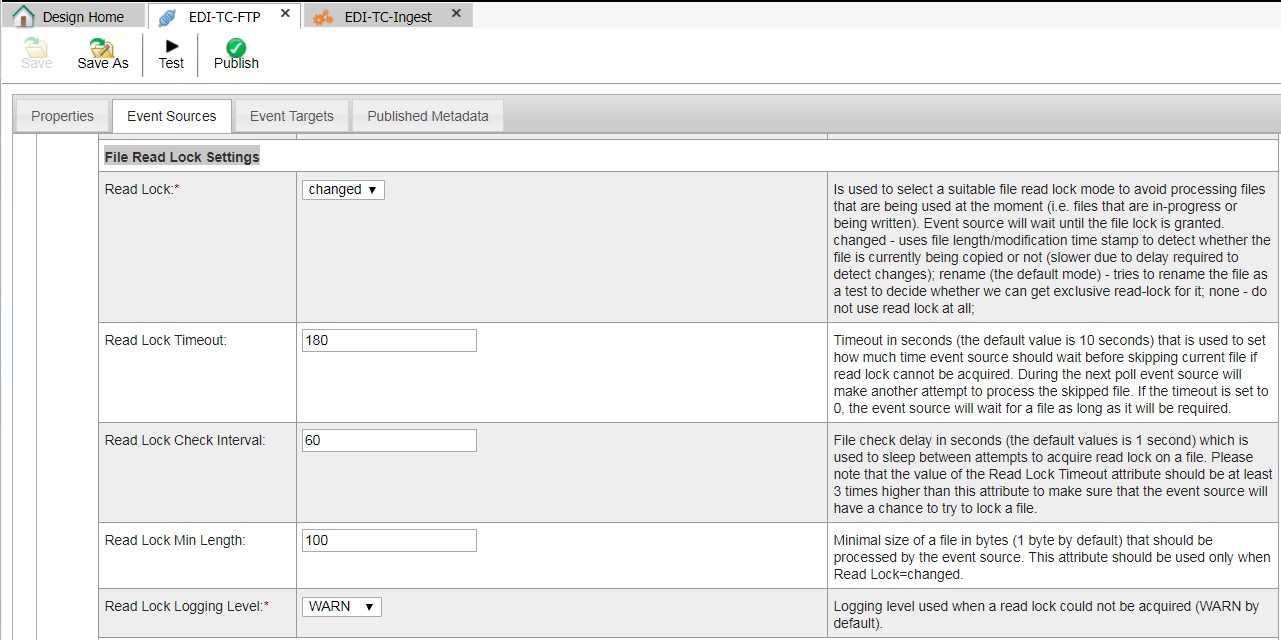

It took a while of reading and re-reading the documentation for the FTP connector to determine that the best strategy for this issue was to use the File Read Lock Settings for the event source, setting the Read Lock value to “changed” and running tests to get the correct values for the other File Read Lock Settings.
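For anyone who wants to see the idea outside of the connector, here is a minimal Python sketch of a "changed"-style check: only treat a file as ready once its size has stopped changing between checks. This is not the connector's implementation, just an illustration of the concept; the function name `wait_until_stable` and its parameters are hypothetical.

```python
import os
import time


def wait_until_stable(path, check_interval=1.0, stable_checks=2, timeout=60.0):
    """Return True once `path` has a non-zero size that stayed the same for
    `stable_checks` consecutive checks; return False if `timeout` elapses."""
    deadline = time.monotonic() + timeout
    last_size = -1
    unchanged = 0
    while time.monotonic() < deadline:
        try:
            size = os.path.getsize(path)
        except OSError:
            size = -1  # file is not visible (yet)
        if size > 0 and size == last_size:
            unchanged += 1
            if unchanged >= stable_checks:
                return True
        else:
            unchanged = 0  # size changed or file is empty: start over
        last_size = size
        time.sleep(check_interval)
    return False
```

The check interval has to be tuned against how quickly the source system writes, which is the same kind of testing the other File Read Lock Settings needed.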

Problem solved!

I sometimes find a need to manage a timestamp with ICRT and prefer not to add a database to the mix unless necessary. This process will set or get a timestamp from a Linux server and can easily be modified for Windows if necessary.
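The general pattern, storing the timestamp in a small file rather than a database, can be sketched in Python as follows. This is only an illustration of the set/get idea under my own assumptions, not the ICRT process itself; the function names and the ISO 8601 file format are hypothetical choices.

```python
from datetime import datetime, timezone
from pathlib import Path


def set_timestamp(path, when=None):
    """Write `when` (default: now, in UTC) to `path` as ISO 8601 text."""
    when = when or datetime.now(timezone.utc)
    Path(path).write_text(when.isoformat())
    return when


def get_timestamp(path, default=None):
    """Read the stored timestamp, or return `default` if none was saved."""
    p = Path(path)
    if not p.exists():
        return default
    return datetime.fromisoformat(p.read_text().strip())
```

A typical use is to call `get_timestamp` at the start of a run to find out where the last run left off, then `set_timestamp` once the run completes successfully.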

Informatica introduced their cloud initiative back in 2006. It has grown to encompass many data-related services including cleansing, EDI, MDM, etc. To set the context of this writing, “Informatica Cloud” here means only the separate-but-related application- and data-centric aspects, sometimes referred to as the Informatica Cloud Integration Platform as a Service (iPaaS). iPaaS includes the Cloud Data Integration functionality based on (and separate from) their flagship PowerCenter ETL application and the Cloud Application Integration based on (and distinct from) the ActiveVOS platform that Informatica acquired back in 2013.

To save bytes, Informatica Cloud Data Integration will be referred to as ICS, and Informatica Cloud Application Integration (a.k.a. Real Time Integration) as ICRT.

Given the background, it is not very surprising that ICS and ICRT are mostly used separately for their key purposes. If there is some data that needs to move from system A to system B, ICS is the tool, and if a workflow needs to happen in real time, ICRT is the way to go. Both of these are valid assumptions, and the fact that ICRT is an additional cost to the default ICS included with iPaaS strengthens this viewpoint.

ICS provides a robust API for managing objects and running tasks. There is a connector in ICRT that provides wizard-driven access to the ICS API. ICRT processes can be exposed as web services that provide both a SOAP and ReST interface. In short, despite their distinct natures, ICS and ICRT can be easily integrated out-of-the-box (or, out-of-the-cloud, in this case).

Informatica provides ICS connectors for many third-party systems that are frequently integrated through ICS, such as SAP, Workday and Salesforce, in addition to common protocol connectors like SOAP, ReST and JDBC. In theory, there are very few systems that cannot be integrated in an ETL-manner using ICS, and this is also true in practice. That said, “able to” and “easy to” are important factors to consider when planning an integration project within delivery scope and maintenance goals.

Most of the connectors for ICS are also exposed in ICRT when enabled or installed. ICRT has a very robust architecture for creating Service Connectors to SOAP and ReST services that can be used by Processes that can in turn (as mentioned earlier) be exposed as SOAP or ReST services.

Not all web services are created equal. Where some provide a straightforward interface to elicit data in a format ready for inter-platform translation, most are intended for lookups and transactions rather than being a source for batch-loading data. Informatica provides some connectors that wrangle popular APIs, such as Salesforce.com, into a structure that is easy to work with. Other services may have a connector that is more suited to being an ETL target, or meant more for the “citizen developer” to be able to load data into a reporting format. Informatica also provides standard ReST and Web Service adapters, but if the API response is several layers deep, getting at the values can be complex and confusing in a graphical design platform such as ICS.

Fortunately, ICRT provides a way to quickly create a Service Connector for any standard API. The Service Connector provides a wizard to turn the API response into an object that can then be streamlined and simplified for easy management in an ICRT process. The ICRT process can perform further transformations, such as renaming fields and formatting data types, or simply act as a pass-through for outputting the more digestible response format.

Once the ICRT process is connected to the Service Connector, you have the option of beginning your integration in either ICRT or ICS, depending on the nature of the integration. For example, if there is a great deal of processing to be done in ICRT before the data is ready for ICS, it is simpler to initiate the process in ICRT, output the service response to a disk location, and then call ICS to perform the ETL steps with the file as a source. Alternatively, when the response is quick or ICRT is only acting as a proxy to simplify the response, the ICRT process can be exposed as a service and that service called by ICS as the ETL integration source.

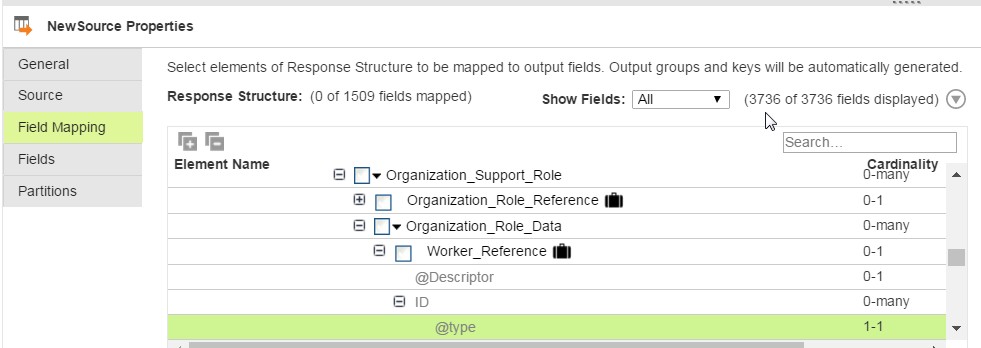

Here is a real-world example of where this approach is useful. Informatica provides a perfectly functional connector to Workday. The connector provides full access to the Workday APIs. The Workday APIs, however, are not very simple to use. They provide some control over the response format, but anything beyond limited data in common fields is deeply nested within complex objects. Note in the image below the number of fields available:

Using an ICRT Service Connector, we can take this complex response and immediately simplify it:

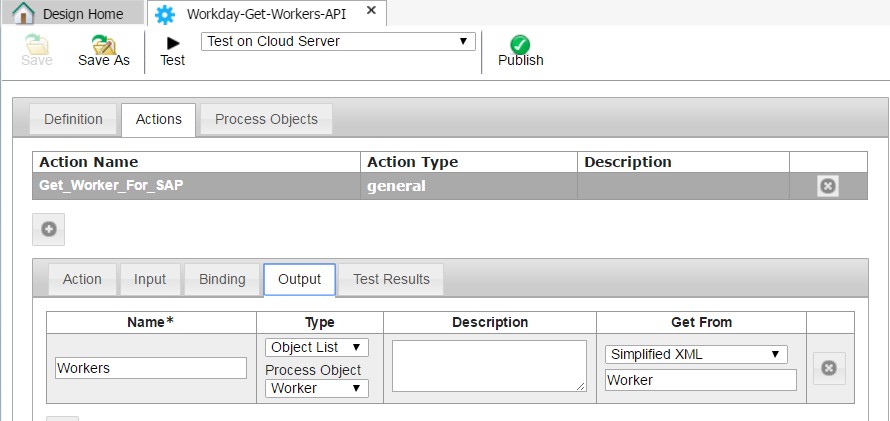

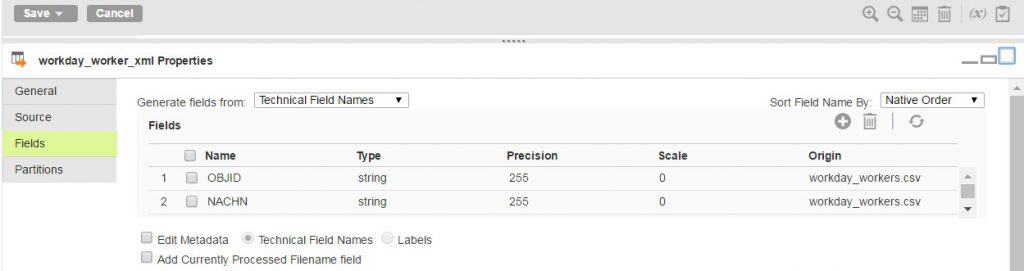

The Service Connector above can be run by an ICRT Process that will map the fields to a process object with the same names as the target system fields (SAP in this example) and then provide them directly to the mapping as a flat data set:

Granted, the mapping could still have been accomplished without the use of ICRT. By introducing ICRT as a proxy to the web service, development can be done faster by parsing simple XML rather than traversing complex nested objects. With the field names being defined in ICRT, if it is necessary to redefine the field sources there is no need to trace back through transformations in a Mapping to locate what may have been impacted.
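To make the proxy idea concrete outside of the tool, here is a small Python sketch of the same pattern: one field map, defined in one place, that pulls values out of a deeply nested response and emits a flat record named after the target fields. The nested structure and every field name below are hypothetical stand-ins, not the actual Workday or SAP schemas.

```python
def get_path(record, path, default=None):
    """Walk a dotted path through nested dicts, taking the first item of any list."""
    current = record
    for key in path.split("."):
        if isinstance(current, list):
            current = current[0] if current else None
        if not isinstance(current, dict) or key not in current:
            return default
        current = current[key]
    return current


def flatten(record, field_map):
    """Build a flat dict of target field name -> value found at the source path."""
    return {target: get_path(record, source) for target, source in field_map.items()}


# Hypothetical mapping: target field names on the left, nested source paths on the right.
FIELD_MAP = {
    "EMPLOYEE_ID": "Worker.Worker_Data.Worker_ID",
    "LAST_NAME": "Worker.Worker_Data.Personal_Data.Name_Data.Last_Name",
    "COST_CENTER": "Worker.Worker_Data.Organization_Data.Cost_Center",
}

nested = {
    "Worker": {
        "Worker_Data": {
            "Worker_ID": "1001",
            "Personal_Data": {"Name_Data": [{"Last_Name": "Nguyen"}]},
        }
    }
}

flat = flatten(nested, FIELD_MAP)
```

If a source field moves, only the path in `FIELD_MAP` changes, which is the same maintenance benefit as defining the field names in ICRT rather than tracing through a Mapping.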

Only one instance of a Mapping Configuration Task can be running at a time. To avoid the error “The Mapping Configuration task failed to run. Another instance of the task is currently running”, use a unique Mapping Configuration Task per process. If you are using Data Synchronization Tasks, much of the same work can be done by a mapping instead, and a single mapping can be called by multiple Mapping Configuration Tasks.

In most cases Informatica Cloud Data Integration functionality is all that is needed and desired to integrate data between systems. In some cases where web services are the source and the format of the service response is nested and complex, using Informatica Cloud Application Integration as a proxy service to simplify the response to just the fields needed for transformation can be a time saver both in the creation of the integration and its future maintenance.

If you are just learning to parse XML with ICRT, this thread has a very detailed example of finding a node by a value formula.

Source: Help Parsing XML node in Formula Editor in ICRT
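The thread covers the ICRT formula syntax. For readers who want to see the underlying idea (select a node because a sibling or child element holds a given value), here is a rough Python analog using the standard library. It is not ICRT formula code, and the XML sample and element names are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample document.
SAMPLE = """<contacts>
  <contact><type>home</type><value>555-0100</value></contact>
  <contact><type>work</type><value>555-0199</value></contact>
</contacts>"""


def find_value_by_type(xml_text, wanted_type):
    """Return the <value> text of the first <contact> whose <type> matches."""
    root = ET.fromstring(xml_text)
    for contact in root.iter("contact"):
        if contact.findtext("type") == wanted_type:
            return contact.findtext("value")
    return None


work_number = find_value_by_type(SAMPLE, "work")
```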