It depends.

Yeah, I hate that answer, too, but it’s because we all prefer simple answers to the real ones. We also want to believe in overnight success, one size fits all, and that the plug-and-play option is all we need. And don’t get me started on long-term weather predictions!

But it helps to know what it depends on, which is, in this case, where you are in your AI journey. The same approach really applies to any learning journey where there are multiple aspects, so we’re going to start with looking at it simply from the perspective of learning.

Why and How and When to Start Deep

If this is your first foray into a new realm of knowledge, start by going deep on one aspect.

Pick the area that you are most interested in. Intrinsic motivation is a better driver for learning than any reason that includes “have to”. Once you have picked that topic, dig in and follow your curiosity until you feel you can converse freely on the topic. This is how you build a baseline mental model.

Going deep in one specific corner makes other adjacent areas easier to absorb later because you actually have a frame of reference to hang new information on. When you encounter a new tool, you filter it through existing mental models to facilitate integration of new knowledge. This cognitive filtering means you aren’t starting from scratch every time a model updates. You are simply updating a specific branch of an existing tree. (See The Memory Paradox: Why Our Brains Need Knowledge in an Age of AI)

The Pivot to Breadth: Mapping the Landscape

Once that baseline mental model exists, going broad is more valuable.

This first accumulation of breadth is to understand what’s possible, or available. You aren’t trying to master everything. You’re mapping the space so you know where the boundaries are. This aligns with the “T-shaped professional” model, defined by having deep expertise in a specific area while also possessing broad knowledge across various disciplines. This structure ensures you have enough technical depth to contribute high-value work immediately. It also gives you enough breadth to collaborate with experts in adjacent fields without needing a translator.

Going broad makes it easier to know exactly when and where to go deep next. Knowing what exists and what is possible makes it easier to say “I have an idea of how that can be done” with conviction.

The Trap of Constant Depth

The problem with going deep on one thing at a time, after the initial deep dive, is that when you need the knowledge or skill in a practical situation, there may be something adjacent that will make it easier or is better suited.

If you’re buried in a single silo, you won’t see it. This is why pure specialists struggle when their niche technology shifts. Markets move faster than individual mastery, which is why modern engineering organizations must embed specialists into existing teams. Breadth prevents you from becoming a legacy asset the moment your specific tech is disrupted. It provides the foundation for transferring implicit knowledge, which is the exact kind of knowledge needed to generate creative ways of tackling business problems. Innovation happens at the intersection of two unrelated fields.

Managing the Hierarchy of Ideas

To move between breadth and depth effectively, you have to understand how to categorize information. A practical framework to understand how to conceptualize those categories in a given realm of knowledge is The Hierarchy of Ideas. This concept allows you to mentally zoom in and out of a topic. It ensures you are always operating at the exact level of detail the current problem requires.

Think of a hierarchy using transportation as the frame. At the top, you have the abstract concept of “Transportation”, which includes planes, boats, trains, cars, skateboards, and ox carts. Moving down a level, you find “wheeled vehicles”, which is still broad enough to encompass trains and scooters. Further down, “Cars” will include internal combustion, electric, and pedal-powered. As a mechanic, you will be more interested in learning the distinctions between a Ferrari and a Hyundai, or between the Sonata and the Kona. The higher you go, the more general and broad the idea becomes. The lower you go, the more specific and detailed it gets.

Navigating this hierarchy is done through “chunking.” When you chunk up, you move from the specific Tesla to the broader category of “Transportation” to understand the big picture. When you chunk down, you move from the general concept of “Cars” into the specific components like the “Battery Management System” to find depth. You can also chunk laterally, moving from “Cars” over to “Trains.” This allows you to find alternative solutions that exist at the exact same level of utility.
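If it helps to see the three moves as a data structure, here is a minimal sketch in Python. The `Idea` class and its method names are my own illustration, not part of any library or of the Hierarchy of Ideas itself, and the tree holds only a few of the transportation examples from above.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Idea:
    """One node in a hierarchy of ideas (hypothetical, for illustration)."""

    name: str
    # The parent link is excluded from repr/compare to avoid cycles.
    parent: Idea | None = field(default=None, repr=False, compare=False)
    children: list[Idea] = field(default_factory=list)

    def add(self, name: str) -> Idea:
        """Attach a more specific idea beneath this one and return it."""
        child = Idea(name, parent=self)
        self.children.append(child)
        return child

    def chunk_up(self) -> Idea | None:
        """Move to the broader idea (None at the top of the hierarchy)."""
        return self.parent

    def chunk_down(self) -> list[Idea]:
        """Move to the more specific ideas directly beneath this one."""
        return list(self.children)

    def chunk_laterally(self) -> list[Idea]:
        """Move sideways to alternatives at the same level."""
        if self.parent is None:
            return []
        return [c for c in self.parent.children if c is not self]


# A small slice of the transportation example.
transportation = Idea("Transportation")
wheeled = transportation.add("Wheeled vehicles")
cars = wheeled.add("Cars")
trains = wheeled.add("Trains")
electric = cars.add("Electric")

print(cars.chunk_up().name)                       # Wheeled vehicles
print([i.name for i in cars.chunk_down()])        # ['Electric']
print([i.name for i in cars.chunk_laterally()])   # ['Trains']
```

The point of the sketch is that every move is relative to where you currently stand: the same node gives you a broader view, a deeper view, or a set of alternatives, depending on which direction you chunk.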

The AI Sandbox: Applying Levels and Chunks

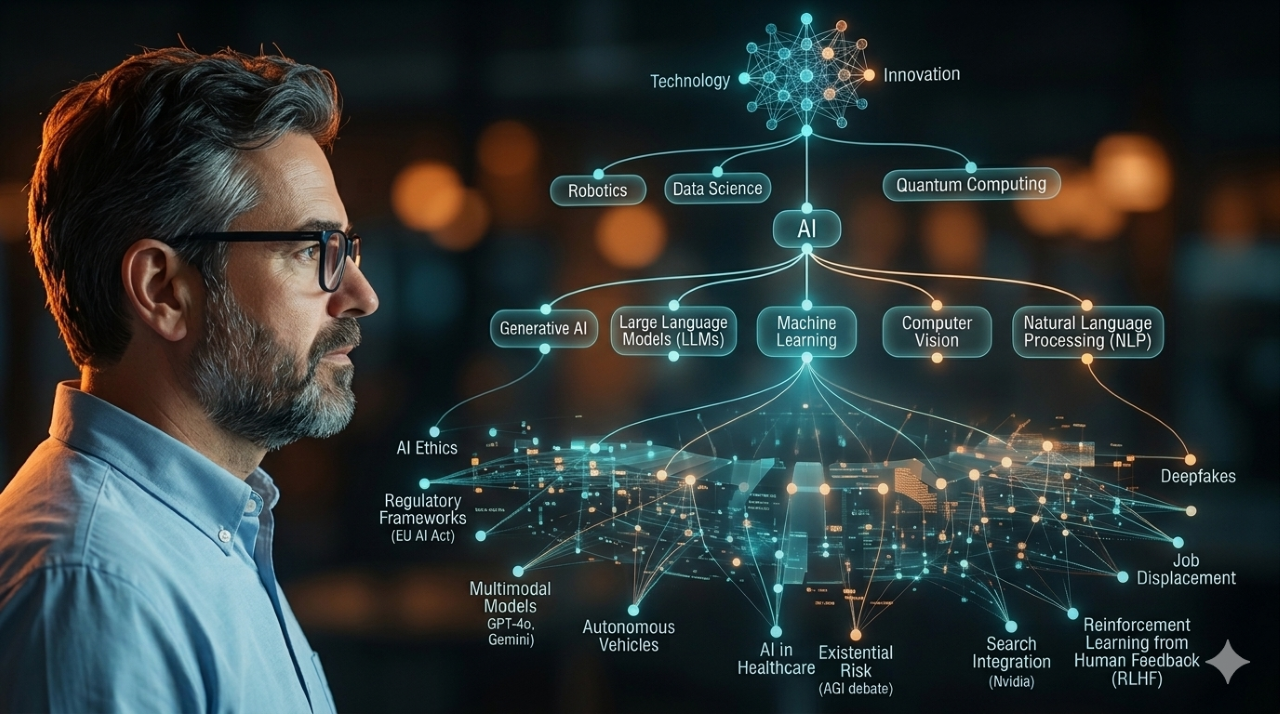

AI is like transportation in the way that zoology is like geology. They aren’t one giant subject. There’s a hierarchy of distinct concepts, applications, audiences, and values that you have to navigate intentionally.

Start by chunking down into a specific primary aspect. Dive into development. If you choose Software Development, don’t just use a generic chatbot. Master how tools integrate directly into the developer’s workflow. Modern development is shifting toward a model where the AI handles low-level syntax while the human engineers and architects manage high-level logic and security.

If you choose Marketing, dive into tools capable of predicting future trends. These platforms move you from general demographic targeting to individual-level behavioral forecasting in real-time. This creates your first deep anchor.

Once you feel steady, chunk up. Skim through news and articles about the overall space so you get a sense of the capabilities. Map the broader landscape, from vector databases to multimodal generation. Staying informed at this high level prevents you from getting blindsided by architectural shifts.

As you build that breadth, you chunk laterally. Then, when something comes up that would benefit greatly from an aspect other than your first specialty, you will recognize that your current focus isn’t the right one in that context. If you are deep in software development but hit a bottleneck in data quality, your broad map will point laterally toward data architecture. You will have a good idea of which aspect is better suited. Then you can partner with someone who has that expertise, or learn it deeply yourself, or both.

Effective mastery requires building a foundation deep enough to create your mental anchor, while maintaining a wide enough perimeter to spot the right tools for the job. You cannot specialize into obsolescence and expect to stay relevant in a field that moves this fast. Whether you are ready for your first technical deep dive or you are currently gathering seeds for future growth, the only wrong move is standing still. Pick a starting point and get to work.

For your convenience (plus, I hate to throw away interesting artifacts that AI outputs when researching my articles), below is a non-exhaustive list of areas to consider when conceptualizing the Hierarchy of Ideas around AI.

AI Deep Dive Reference Table

| Specialization | Primary Focus | Practical Application |
| --- | --- | --- |
| Software Development | AI-assisted coding and autonomous agents. | Tools handle boilerplate code and test generation. This shifts engineering cycles away from syntax and toward system architecture. |
| Marketing Campaigns | Predictive analytics and forecasting. | Systems are built for predicting future trends. Marketers deploy these models to adjust budgets preemptively rather than reacting to yesterday’s reports. |
| Prompt Engineering | Advanced linguistics and logic structures. | Mastery involves navigating how mental models assist in problem-solving. This discipline structures language strictly enough to force an LLM into predictable reasoning patterns. |
| Data Architecture | AI-ready data pipelines and vector databases. | Success requires establishing a comprehensive inventory of everything in your ecosystem. AI models hallucinate when fed fragmented data; clean pipelines act as the essential guardrail against garbage outputs. |
| Content Creation | Generative text, image, and video workflows. | AI enables executing multidisciplinary mental models for solving complex problems. Scaling content now relies on curating outputs against a strict brand voice, not writing from a blank page. |
| Business Intelligence | Pattern detection and anomaly resolution. | BI teams use AI to deploy real-time anomaly detection. This replaces static dashboards with active alerting systems that diagnose the drop in metrics before leadership even asks. |

© Scott S. Nelson